Your Next Startup: The Auth Layer for the Agentic Era

Part of the "Your Next Startup" series, where I break down startup ideas I think are worth building.

Auth0 sold for $6.5B. Okta is worth $15B+. CyberArk, Delinea, BeyondTrust, all printing money from managing who gets access to what. And yet none of them were built for what's actually happening right now.

Your Slack bot spins up a Claude agent that calls your billing API, which triggers a background job that queries production, which fires a webhook to Stripe, all because a user pressed a button twenty minutes ago and has since gone to lunch. Who authorized that database query? With what permissions? For how long? Can you prove it to an auditor?

Be honest. The answer at most companies is that there's probably a long-lived API key in an environment variable somewhere. It has way more permissions than it needs. Nobody remembers who created it. It never expires.

That's the startup.

Human Auth is Cooked (in a Good Way)

Here's something that was controversial two years ago but is obvious now. Traditional human authentication is a commodity. Login with email, OAuth, TOTP, passkeys, magic links. These are solved problems with a dozen good implementations. In the age of AI-assisted development, spinning up solid human auth takes hours, not months. Claude Code can scaffold a complete auth integration with social login, MFA, and session management faster than you can read Auth0's docs.

The moat in auth is no longer "we make login work." The moat is everything that happens after login, and everything that happens without a login at all.

Look at how identity actually works at a modern company.

Humans get proper auth. SSO, MFA, RBAC, audit trails. Well solved.

Services get environment variables. An API key minted six months ago by someone who may have left the company. Permissions? Whatever the key has. Expiry? Never.

AI agents get the worst deal of all. They inherit the human's complete credentials. Your AI assistant that only needs to read today's calendar can delete every event you've ever created. No scoping, no time-bounding, no containment.

The idea here is a single primitive that covers all three. Every access event, whether it's a human logging in, a service calling an API, or an agent performing a task, goes through the same thing: an Access Grant. Time-bound, scope-limited, auditable. Who wants access, what do they want to touch, for how long, who approved it, and what happened during the grant. That's the whole abstraction. HashiCorp Vault does credential minting. OPA does policy evaluation. Teleport does infrastructure access. Nobody unifies these into a single grant that works the same way for humans, agents, and services.
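To make the abstraction concrete, here is a minimal sketch of what an Access Grant record could look like. The class name, field names, and the `allows` method are my own illustration, not an existing library or a finished design.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class AccessGrant:
    """One time-bound, scope-limited, auditable grant (hypothetical shape)."""
    principal: str         # who wants access: a human, a service, or an agent
    principal_kind: str    # "human" | "service" | "agent"
    resource: str          # what they want to touch, e.g. "prod.customers"
    actions: frozenset     # what they may do, e.g. frozenset({"read"})
    approved_by: str       # the approver, or the policy that auto-approved
    issued_at: datetime
    ttl: timedelta         # for how long
    audit_log: list = field(default_factory=list)  # what happened during the grant

    def expires_at(self) -> datetime:
        return self.issued_at + self.ttl

    def allows(self, action: str, resource: str, now: datetime) -> bool:
        """Check an attempt against scope and expiry, and record it either way."""
        ok = (
            resource == self.resource
            and action in self.actions
            and self.issued_at <= now < self.expires_at()
        )
        self.audit_log.append((now.isoformat(), action, resource, ok))
        return ok


issued = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
grant = AccessGrant(
    principal="billing-reconciler",
    principal_kind="agent",
    resource="prod.customers",
    actions=frozenset({"read"}),
    approved_by="alice@example.com",
    issued_at=issued,
    ttl=timedelta(minutes=5),
)
one_minute_in = issued + timedelta(minutes=1)
print(grant.allows("read", "prod.customers", one_minute_in))    # True
print(grant.allows("delete", "prod.customers", one_minute_in))  # False: out of scope
print(grant.allows("read", "prod.customers", issued + timedelta(minutes=6)))  # False: expired
```

The same record works whether `principal_kind` is a human, a service, or an agent, which is the whole point of having one primitive.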

Auth is the Best Guardrail You Have

Here's the conceptual leap that makes this more than just another IAM startup. Auth and agent guardrails are the same problem viewed from different angles.

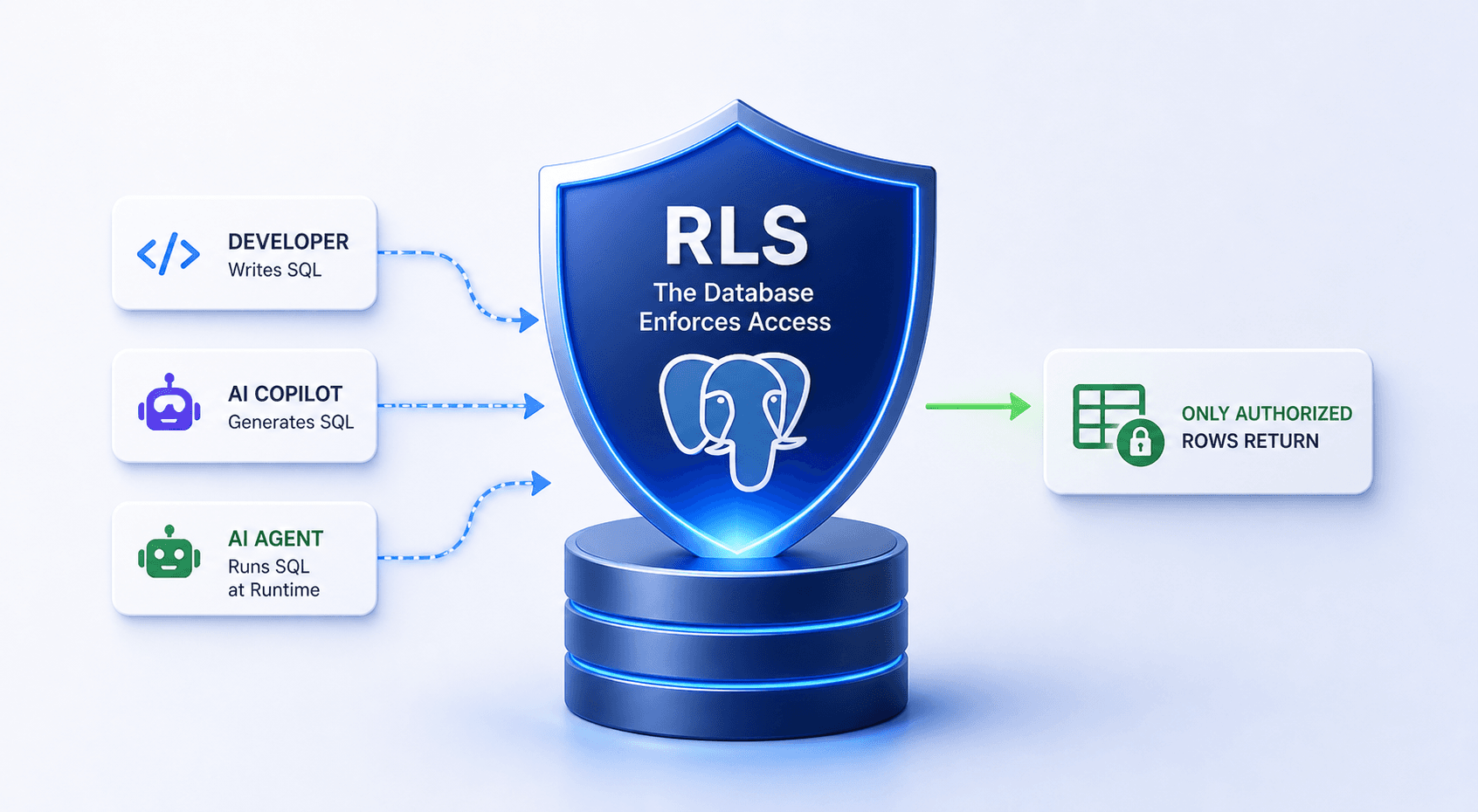

Everyone is building guardrails at the prompt layer. Don't say bad things, don't hallucinate, stay in character. But the most effective guardrail is the simplest one: if the agent doesn't have permission to do something, it can't do it. No prompt injection, no jailbreak, no cleverness overrides the fact that the credential simply doesn't exist yet. You constrain agent behavior at the infrastructure level, which is deterministic, instead of the language model level, which is fundamentally unreliable. The agent can say whatever it wants about what it intends to do. It can only do what the access layer allows.

When an agent hits a permission boundary, the system doesn't just return a 403 and kill the flow. It initiates an access request. This is what I'd call Human-in-the-Loop for Auth. Same pattern as "human-in-the-loop" for AI decisions, but for permissions. The agent needs to access customer PII? Instead of either blocking it or giving it a permanent key, the system pings a human approver in Slack. "Agent billing-reconciler wants read access to prod.customers for 5 minutes, triggered by monthly-reconcile, approve?" Engineer approves, a scoped credential is minted, the agent does its work, the credential dies, everything is logged.
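The flow above might be sketched like this. Everything here is hypothetical: `ask_human` stands in for a real Slack approval prompt, and the returned dict stands in for a credential that a secrets backend would actually mint.

```python
import uuid
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class AccessRequest:
    agent: str
    action: str
    resource: str
    ttl_seconds: int
    triggered_by: str

    def prompt(self) -> str:
        minutes = self.ttl_seconds // 60
        return (
            f"Agent {self.agent} wants {self.action} access to {self.resource} "
            f"for {minutes} minutes, triggered by {self.triggered_by}, approve?"
        )


def request_access(
    req: AccessRequest, ask_human: Callable[[str], bool], audit: list
) -> Optional[dict]:
    """Turn a permission miss into an approval request instead of a bare 403."""
    approved = ask_human(req.prompt())  # in practice: a Slack message with buttons
    audit.append({"request": req, "approved": approved})  # log both outcomes
    if not approved:
        return None
    # Stand-in for a short-lived, scoped credential minted on approval.
    return {
        "token": uuid.uuid4().hex,
        "scope": (req.action, req.resource),
        "ttl_seconds": req.ttl_seconds,
    }


audit_trail = []
req = AccessRequest("billing-reconciler", "read", "prod.customers", 300, "monthly-reconcile")
cred = request_access(req, ask_human=lambda message: True, audit=audit_trail)
print(req.prompt())
print(cred["scope"])  # ('read', 'prod.customers')
```

A denial returns `None` rather than raising, so the agent can carry on with whatever work does not need the resource.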

The agent is contained, not killed. The work continues, just with appropriate oversight. This is radically different from today, where the agent either has permanent access or no access at all.

MCP, Tool-Level Policies, and the Credential Gateway

Think about MCP for a second. Model Context Protocol is essentially a standard for agent tool use. An agent connects to an MCP server and gets access to a set of tools: read a database, search files, create a ticket, send an email. Today, when you connect an agent to an MCP server, it gets access to all the tools on that server. There's no per-tool policy. There's no "this agent can use read-ticket but not delete-ticket." There's no "this agent can use send-email only during business hours and only to internal addresses."

Per-tool-call policy enforcement is a natural extension of this platform. Every MCP tool invocation goes through the access grant system. The policy engine evaluates whether this agent has permission to call this specific tool, with these specific parameters, right now. The answer might be auto-approve, might require human approval, might be deny. This turns the platform into the control plane for agent capabilities. Not just "can this agent access this resource" but "can this agent perform this action through this tool with these parameters at this time." That's a complete permission boundary for agentic systems.
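Here is a rough sketch of a per-tool-call check using the ticket and email examples from earlier. The rules are hard-coded purely for illustration; in a real system they would live in a policy engine. The `@example.com` domain, the 9-to-5 weekday window, and the choice to route off-policy emails to a human rather than deny them are all assumptions of mine.

```python
from datetime import datetime
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    NEEDS_HUMAN = "needs_human"
    DENY = "deny"


def evaluate_tool_call(agent: str, tool: str, params: dict, now: datetime) -> Decision:
    """Decide on one tool invocation from the tool name, its parameters, and the time."""
    if tool == "read-ticket":
        return Decision.AUTO_APPROVE
    if tool == "delete-ticket":
        return Decision.DENY
    if tool == "send-email":
        recipients = params.get("to", [])
        internal = bool(recipients) and all(
            addr.endswith("@example.com") for addr in recipients
        )
        business_hours = now.weekday() < 5 and 9 <= now.hour < 17
        if internal and business_hours:
            return Decision.AUTO_APPROVE
        return Decision.NEEDS_HUMAN  # assumption: escalate instead of denying
    return Decision.DENY  # default-deny for tools the policy does not know


monday_morning = datetime(2025, 1, 6, 10, 0)
saturday_morning = datetime(2025, 1, 4, 10, 0)
internal_mail = {"to": ["bob@example.com"]}

print(evaluate_tool_call("support-bot", "read-ticket", {}, monday_morning))
print(evaluate_tool_call("support-bot", "send-email", internal_mail, monday_morning))
print(evaluate_tool_call("support-bot", "send-email", internal_mail, saturday_morning))
print(evaluate_tool_call("support-bot", "delete-ticket", {}, monday_morning))
```

The `agent` argument is unused in this toy policy, but it is in the signature because real rules would differ per agent.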

Now layer the Credential Gateway on top. When you give an AI agent an API key, even a short-lived one, that key exists in the agent's context. It can leak in a response, get extracted via prompt injection, get logged or cached. The moment a credential touches an agent's context window, your security boundary is the LLM. And LLMs are not a security boundary.

The gateway ensures agents never hold real credentials at all. They get an opaque internal token that means nothing outside your system. When the agent needs to call Stripe or GitHub or your internal API, it goes through the gateway. The gateway validates the token, checks the policy engine, swaps it for the real external credential, forwards the request, and returns the response. The agent calls gateway.yourplatform.com/stripe/charges with its internal token. The gateway translates that to api.stripe.com/charges with the actual Stripe key. The agent never knows the key exists.
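The token swap at the heart of the gateway could look roughly like this. The lookup tables, the token value, and the placeholder key are all made up for the sketch, the policy check is left out for brevity, and the function only builds the outgoing request rather than sending it.

```python
from urllib.parse import urlsplit

# Hypothetical state held by the gateway. None of it is visible to the agent.
REAL_CREDENTIALS = {"stripe": "sk_live_placeholder"}
UPSTREAMS = {"stripe": "https://api.stripe.com"}
INTERNAL_TOKENS = {
    "tok_agent_123": {"agent": "billing-reconciler", "services": {"stripe"}},
}


def translate_request(url: str, internal_token: str) -> dict:
    """Validate the opaque token and rewrite the request for the real upstream."""
    session = INTERNAL_TOKENS.get(internal_token)
    if session is None:
        raise PermissionError("unknown or revoked internal token")
    # "/stripe/charges" -> service "stripe", remaining path "charges"
    service, _, rest = urlsplit(url).path.lstrip("/").partition("/")
    if service not in session["services"] or service not in UPSTREAMS:
        raise PermissionError(f"{session['agent']} may not call {service}")
    return {
        "url": f"{UPSTREAMS[service]}/{rest}",
        "headers": {"Authorization": f"Bearer {REAL_CREDENTIALS[service]}"},
    }


forwarded = translate_request(
    "https://gateway.yourplatform.com/stripe/charges", "tok_agent_123"
)
print(forwarded["url"])  # https://api.stripe.com/charges
```

Revoking an agent is just deleting its entry from `INTERNAL_TOKENS`. The real Stripe key never has to rotate.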

This gives you credential isolation, request-level enforcement, response filtering so you can strip sensitive fields before they reach the LLM, instant revocation without rotating external keys, full observability of what every agent does across all external services, and even sandbox mode where the gateway returns mock responses instead of hitting real services. Same agent code, same auth flow, zero risk in development. Think of it as a reverse proxy, but for auth. The same way a load balancer sits between the internet and your services, the Credential Gateway sits between your agents and the outside world.
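Two of those properties, response filtering and sandbox mode, are simple enough to sketch. The field names in `SENSITIVE_FIELDS` and the shape of the mock response are assumptions for illustration only.

```python
SENSITIVE_FIELDS = {"card_number", "ssn", "email"}  # hypothetical deny-list


def redact(payload):
    """Recursively replace sensitive fields before the response reaches the LLM."""
    if isinstance(payload, dict):
        return {
            key: "[REDACTED]" if key in SENSITIVE_FIELDS else redact(value)
            for key, value in payload.items()
        }
    if isinstance(payload, list):
        return [redact(item) for item in payload]
    return payload


def handle(service: str, path: str, call_upstream, sandbox: bool = False):
    """Return a mock in sandbox mode, otherwise the filtered upstream response."""
    if sandbox:
        # The real service is never contacted in sandbox mode.
        return {"mock": True, "service": service, "path": path}
    return redact(call_upstream(service, path))


def fake_upstream(service, path):
    # Stand-in for the real HTTP call the gateway would make.
    return {
        "id": "cus_1",
        "email": "a@example.com",
        "cards": [{"card_number": "4242", "brand": "visa"}],
    }


print(handle("stripe", "customers/cus_1", fake_upstream))
print(handle("stripe", "customers/cus_1", fake_upstream, sandbox=True))
```

The agent code calls `handle` the same way in both modes, which is what makes "same agent code, same auth flow" possible.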

Delegated Mode, Background Mode, and Trust Escalation

There's a subtle but important distinction in agent permissions. When a user triggers an agent directly ("check my calendar and find a meeting time") the agent is operating as a delegate of that user. It makes sense for it to have the user's calendar permissions, scoped to read-only, time-bound to that interaction.

But when that same agent runs a background job at 3 AM processing data or running reports, it's not acting on behalf of anyone. It shouldn't have any user's permissions. It should have its own identity with its own policy-governed grants. Today this distinction doesn't exist. Agents either run as the user, which means too much access in background mode, or as a service account, which means permanent unscoped access in interactive mode. Delegated mode versus background mode should be a first-class concept in the platform.
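One way to make the distinction first-class is to carry it on every run and let it pick the permission ceiling. This is a sketch under my own assumptions: the `RunContext` type, the scope strings, and the two lookup tables are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RunContext:
    agent: str
    on_behalf_of: Optional[str] = None  # set only when a user triggered the run

    @property
    def mode(self) -> str:
        return "delegated" if self.on_behalf_of else "background"


def effective_scopes(ctx: RunContext, requested: set, user_scopes: dict, agent_scopes: dict) -> set:
    """Cap the requested scopes by the user's scopes (delegated) or the agent's own (background)."""
    if ctx.mode == "delegated":
        ceiling = user_scopes.get(ctx.on_behalf_of, set())
    else:
        ceiling = agent_scopes.get(ctx.agent, set())
    return set(requested) & ceiling


user_scopes = {"alice": {"calendar:read", "calendar:write"}}
agent_scopes = {"scheduler": {"reports:read"}}

interactive = RunContext(agent="scheduler", on_behalf_of="alice")
nightly = RunContext(agent="scheduler")

print(effective_scopes(interactive, {"calendar:read"}, user_scopes, agent_scopes))
# {'calendar:read'}
print(effective_scopes(nightly, {"calendar:read", "reports:read"}, user_scopes, agent_scopes))
# {'reports:read'}
```

The nightly run asks for Alice's calendar and gets nothing from it, because in background mode no user's permissions are in play.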

And then there's trust escalation. A new agent starts with zero trust. Every access request needs human approval. After 10 approved requests of the same type, the policy upgrades that request type to auto-approve. The agent earns trust, like a new employee does. If something anomalous happens, trust resets.
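A minimal sketch of that trust ledger, assuming approvals are counted per agent and request type, and assuming an anomaly wipes all of that agent's earned trust. The class and method names are hypothetical.

```python
from collections import defaultdict

AUTO_APPROVE_THRESHOLD = 10  # from the text: 10 approved requests of the same type


class TrustLedger:
    def __init__(self):
        # (agent, request_type) -> number of human-approved requests so far
        self._approved = defaultdict(int)

    def needs_human(self, agent: str, request_type: str) -> bool:
        return self._approved[(agent, request_type)] < AUTO_APPROVE_THRESHOLD

    def record_approval(self, agent: str, request_type: str) -> None:
        self._approved[(agent, request_type)] += 1

    def record_anomaly(self, agent: str) -> None:
        """Assumption: an anomaly resets every request type for that agent."""
        for key in [k for k in self._approved if k[0] == agent]:
            del self._approved[key]


ledger = TrustLedger()
for _ in range(10):
    ledger.record_approval("billing-reconciler", "read:prod.customers")

print(ledger.needs_human("billing-reconciler", "read:prod.customers"))   # False
print(ledger.needs_human("billing-reconciler", "write:prod.customers"))  # True
ledger.record_anomaly("billing-reconciler")
print(ledger.needs_human("billing-reconciler", "read:prod.customers"))   # True
```

Trust is earned per request type, so ten approved reads say nothing about writes.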

Where This Goes

What excites me about this idea is how many directions it can expand. The starting point is narrow: give your AI agents secure time-bound access to your database with Slack approval. One integration, immediate value, clear before and after. But it naturally grows into MCP auth, per-tool policy enforcement, agent guardrails, credential gateways, sandbox environments, compliance automation, trust scoring. Each of these could be its own feature or its own product.

The architecture can lean on strong existing infrastructure. SPIFFE and SPIRE for workload identity, where agents get cryptographic identity based on what they are, not what secret they hold. Cedar or OPA for policy evaluation. Vault or OpenBao for credential minting. Temporal for durable approval workflows. Envoy for the gateway proxy. PostgreSQL for audit. What the startup builds is the orchestration layer, the UX, and the product intelligence on top.

The market straddles IAM (Okta, Auth0, Clerk at $20B+, focused on humans) and PAM (CyberArk, BeyondTrust at $5B+, built for enterprise IT). Agent Access Management as a category doesn't formally exist yet. The wedge is developer teams building AI agent products who need to answer one question: how do I give my agent access to production without giving it the keys to the kingdom?

Auth0 gave every app a login page. This startup gives every agent a permission boundary. Somebody should build it.

Ideas I think are worth building. Some I'll build, some I hope you will.